Current brief

LLM comparison

A trust-first read on where OpenAI, Anthropic, and Gemini each fit best.

Brief summary

OpenAI is still the broadest default for teams that want one vendor across chat, coding, search, computer use, and execution, and GPT-5.5 materially strengthens that case. Claude Opus 4.7 remains the sharpest trust signal for code-heavy agents, careful review, document reasoning, high-resolution vision, and long-running workflows. Gemini is most compelling when long context, Google ecosystem leverage, search grounding, and cost discipline matter more than mindshare.

Why this comparison belongs here

Trust in AI platforms is not just about intelligence. It is about cost profile, operating consistency, context handling, migration burden, safety posture, and whether leadership changes across model generations feel stable or chaotic.

The current OpenAI and Anthropic releases both change the trust read. GPT-5.5 raises OpenAI's ceiling for terminal work, computer use, knowledge work, and long-context tasks while increasing flagship API pricing. Opus 4.7 keeps the same premium Opus price, adds stronger coding and agent behavior, introduces high-resolution image handling, and forces production teams to review model IDs, effort controls, token behavior, and prompt harnesses.
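Part of that migration review can be made mechanical. The sketch diffs two pinned deployment configurations and lists every setting that changed, so each change gets an explicit sign-off. It rests on stated assumptions: `DeploymentConfig`, its field names, and the placeholder model IDs are hypothetical and do not match any vendor's API. A config diff only catches explicit settings. Shifts in how a new model counts or spends tokens still have to be measured on real traffic.

```python
from dataclasses import dataclass, fields


@dataclass(frozen=True)
class DeploymentConfig:
    """Pinned settings for one production deployment (hypothetical field names)."""

    model_id: str
    effort: str
    max_output_tokens: int
    prompt_harness_version: str


def review_items(old: DeploymentConfig, new: DeploymentConfig) -> list[str]:
    """Return one 'field: old -> new' line per setting that differs, in field order."""
    items = []
    for f in fields(DeploymentConfig):
        before, after = getattr(old, f.name), getattr(new, f.name)
        if before != after:
            items.append(f"{f.name}: {before!r} -> {after!r}")
    return items


if __name__ == "__main__":
    # Placeholder values only; these are not real model IDs or vendor settings.
    current = DeploymentConfig("opus-current", "high", 8000, "harness-v3")
    candidate = DeploymentConfig("opus-candidate", "high", 16000, "harness-v3")
    for item in review_items(current, candidate):
        print(item)
```

Here the model ID and the output-token cap would be flagged for review. The unchanged effort level and prompt harness would not be flagged.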

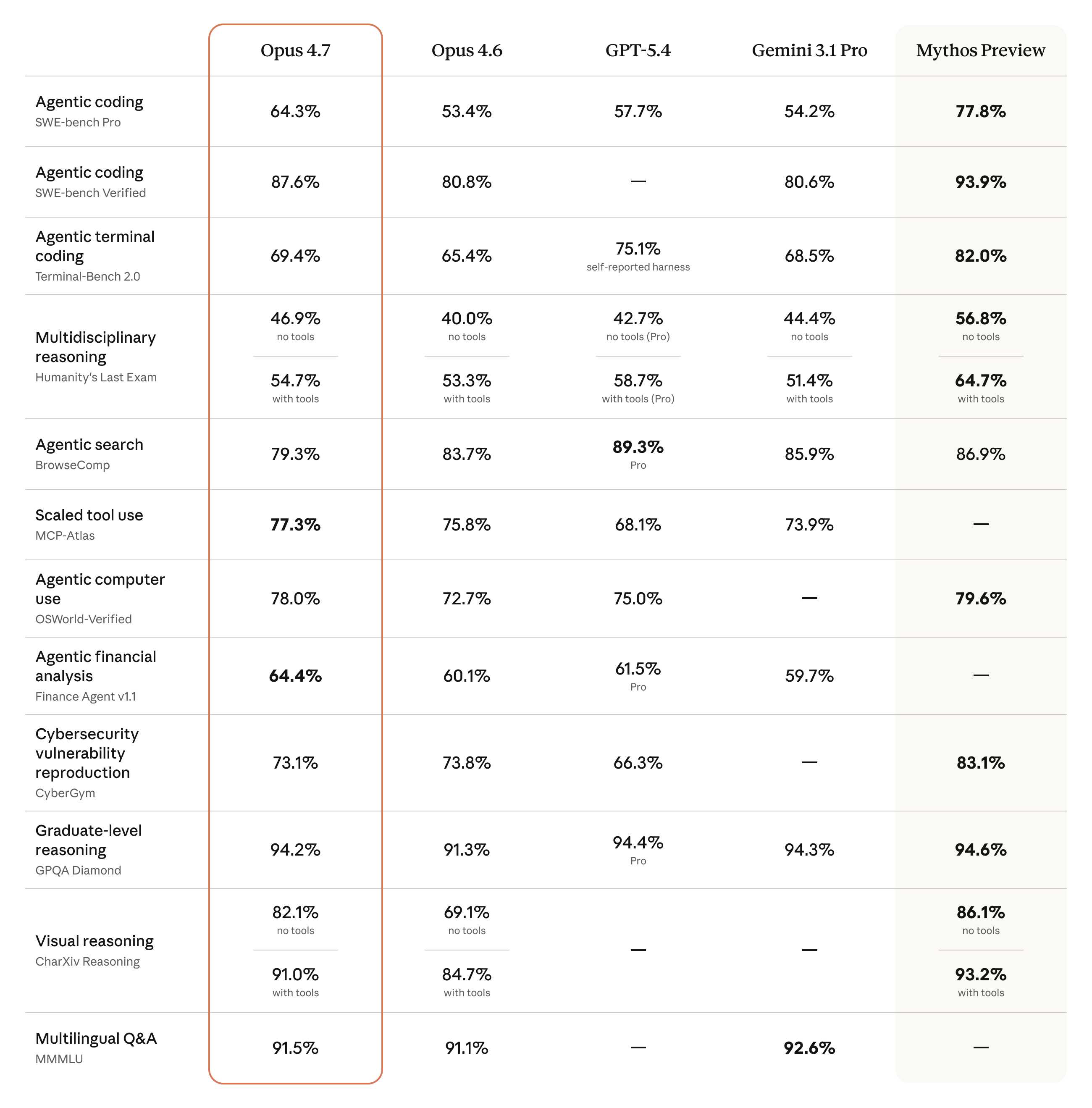

What the current frontier snapshot says

The newest official benchmark and pricing sources sketch a fairly crisp division of labor.

- Claude Opus 4.7 gives Anthropic the stronger public SWE-bench Pro story and a persuasive trust case for long-running coding, review, document, vision, and agent workflows.

- GPT-5.5 gives OpenAI the stronger current signal for Terminal-Bench 2.0, OSWorld-Verified, BrowseComp, GDPval, and general platform breadth.

- Gemini 3.1 Pro remains the cleanest Google-native choice when long context, search grounding, Vertex routing, multimodality, and cost-sensitive production economics drive the architecture.

- All three are close enough that real workflows matter more than leaderboard rankings.

Current benchmark read after GPT-5.5 and Claude Opus 4.7

The trust question is not which logo wins. It is whether the model is predictably strong in the exact operating mode you will ship.

| Area | Best current signal | Trust implication |
|---|---|---|
| Agentic coding | Opus 4.7 posts 64.3% on SWE-bench Pro and 87.6% on SWE-bench Verified. GPT-5.5 posts 58.6% on SWE-bench Pro, up from GPT-5.4 at 57.7%. | Claude is still the cleaner public code-repair bet on SWE-bench Pro, but GPT-5.5 narrows the gap while strengthening the surrounding agent platform. |
| Terminal coding | GPT-5.5 posts 82.7% on Terminal-Bench 2.0, ahead of GPT-5.4 at 75.1%, Opus 4.7 at 69.4%, and Gemini 3.1 Pro at 68.5% in OpenAI's comparison table. | OpenAI deserves first evaluation for CLI-heavy developer agents, shell workflows, and tool-rich automation. |
| Knowledge work | OpenAI reports GPT-5.5 at 84.9% on GDPval wins-or-ties, ahead of GPT-5.4 at 83.0%, Opus 4.7 at 80.3%, and Gemini 3.1 Pro at 67.3%. | OpenAI has the strongest current all-around knowledge-work signal, while Claude remains highly compelling for careful review and long-running agent work. |
| Document and visual reasoning | OpenAI's table puts GPT-5.5 at 54.1% on OfficeQA Pro and Opus 4.7 at 43.6%, while Anthropic reports a major Opus 4.7 lift over Opus 4.6 on its own document-reasoning evaluations. | Trust-sensitive document work should be tested on the buyer's own files. The two vendors emphasize different document evaluations, so local results matter more than any headline score. |
| Search and browse | GPT-5.5 Pro posts 90.1% on BrowseComp, GPT-5.4 Pro posts 89.3%, Gemini 3.1 Pro posts 85.9%, GPT-5.5 posts 84.4%, and Opus 4.7 posts 79.3%. | Search-heavy assistants should keep OpenAI and Gemini in the routing pool even if Anthropic is the default for reasoning, review, or code. |
| Graduate-level reasoning | GPT-5.5 posts 93.6% on GPQA Diamond; GPT-5.4 Pro is 94.4%, Gemini 3.1 Pro is 94.3%, and Opus 4.7 is 94.2%. | At the frontier, differences can be too small to dominate procurement. Governance, latency, tooling, data paths, and review burden decide the safer choice. |
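The table mixes same-vendor and cross-vendor comparisons. A useful reading aid is the margin on each benchmark between the leading model and the best model from a different vendor. The sketch does that arithmetic on the figures quoted in the table and nothing else. Those scores are vendor-reported, not independently reproduced. Mapping model labels to vendors by name prefix is a simplifying assumption.

```python
# Percent scores copied from the table above (vendor-reported, not reproduced).
SCORES: dict[str, dict[str, float]] = {
    "SWE-bench Pro": {"Opus 4.7": 64.3, "GPT-5.5": 58.6, "GPT-5.4": 57.7},
    "Terminal-Bench 2.0": {"GPT-5.5": 82.7, "GPT-5.4": 75.1, "Opus 4.7": 69.4, "Gemini 3.1 Pro": 68.5},
    "GDPval (wins or ties)": {"GPT-5.5": 84.9, "GPT-5.4": 83.0, "Opus 4.7": 80.3, "Gemini 3.1 Pro": 67.3},
    "OfficeQA Pro": {"GPT-5.5": 54.1, "Opus 4.7": 43.6},
    "BrowseComp": {"GPT-5.5 Pro": 90.1, "GPT-5.4 Pro": 89.3, "Gemini 3.1 Pro": 85.9, "GPT-5.5": 84.4, "Opus 4.7": 79.3},
    "GPQA Diamond": {"GPT-5.4 Pro": 94.4, "Gemini 3.1 Pro": 94.3, "Opus 4.7": 94.2, "GPT-5.5": 93.6},
}


def vendor(model: str) -> str:
    """Map a model label to its vendor by name prefix (a simplifying assumption)."""
    if model.startswith("GPT"):
        return "OpenAI"
    if model.startswith("Opus"):
        return "Anthropic"
    if model.startswith("Gemini"):
        return "Google"
    raise ValueError(f"unknown model label: {model}")


def cross_vendor_margin(results: dict[str, float]) -> tuple[str, str, float]:
    """Return (leader, best rival from another vendor, margin in percentage points)."""
    leader = max(results, key=results.get)
    rivals = {m: s for m, s in results.items() if vendor(m) != vendor(leader)}
    rival = max(rivals, key=rivals.get)
    # Round to one decimal to match the precision of the reported scores.
    return leader, rival, round(results[leader] - results[rival], 1)


if __name__ == "__main__":
    for bench, results in SCORES.items():
        leader, rival, margin = cross_vendor_margin(results)
        print(f"{bench}: {leader} leads {rival} by {margin} points")
```

The cross-vendor margins on these figures are:

- 5.7 points on SWE-bench Pro
- 13.3 on Terminal-Bench 2.0
- 4.6 on GDPval
- 10.5 on OfficeQA Pro
- 4.2 on BrowseComp
- 0.1 on GPQA Diamond

The GPQA result fits the reasoning row's point that frontier gaps can be too small to drive procurement on their own.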

Why older generations still matter

Model selection is not static, and earlier generations shape buyer trust more than one benchmark cycle does.

- OpenAI built its advantage on broad adoption and all-rounder utility.

- Anthropic built trust through coding strength, long-context reliability, and a more organic assistant feel for many users.

- Google's Gemini line kept closing gaps while leveraging massive context windows, multimodality, and ecosystem reach.

Creative writing is a revealing trust test

Creative writing still matters because it exposes tone, judgment, and overfitting to style prompts. But for an enterprise trust report, it should sit below operational evidence: code execution quality, document reasoning, tool use, computer use, latency, token behavior, and whether the migration path creates production risk.

A trust-oriented buyer should therefore ask not 'which model wins?' but 'which failure mode can I live with?' Generic output, tool underuse, scope drift, price premium, browsing weakness, token changes, and context tradeoffs each matter differently depending on the workload.
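That question becomes concrete if you set a limit for each failure mode per workload. A model is ruled out once a mode exceeds its limit, and the buyer's own evaluation results are checked against those limits. The sketch assumes each observed rate is the fraction of local eval tasks that showed a given failure mode. The function names, mode names, and limits are hypothetical placeholders, not measured values for any real model. A mode that was never measured is flagged rather than assumed absent.

```python
def intolerable_modes(observed: dict[str, float], tolerance: dict[str, float]) -> list[str]:
    """Return, sorted, the failure modes that rule a candidate out for this workload.

    A mode is flagged when its observed rate exceeds the workload's limit, or
    when it was never measured: an untested failure mode is not evidence that
    the failure is absent.
    """
    flagged = []
    for mode, limit in sorted(tolerance.items()):
        rate = observed.get(mode)
        if rate is None or rate > limit:
            flagged.append(mode)
    return flagged


def acceptable_candidates(
    results: dict[str, dict[str, float]], tolerance: dict[str, float]
) -> list[str]:
    """Return the candidates, sorted by name, that have no intolerable failure mode."""
    return sorted(
        name for name, observed in results.items()
        if not intolerable_modes(observed, tolerance)
    )


if __name__ == "__main__":
    # Hypothetical limits for a code-review workload: scope drift is barely
    # tolerable, while browsing weakness hardly matters.
    code_review = {"scope_drift": 0.02, "tool_underuse": 0.05, "browsing_weakness": 0.50}
    # Placeholder eval results for two unnamed candidates (not real measurements).
    results = {
        "candidate_a": {"scope_drift": 0.01, "tool_underuse": 0.03, "browsing_weakness": 0.30},
        "candidate_b": {"scope_drift": 0.04, "tool_underuse": 0.01, "browsing_weakness": 0.10},
    }
    print(acceptable_candidates(results, code_review))
    print(intolerable_modes(results["candidate_b"], code_review))
```

With these placeholders only `candidate_a` survives. `candidate_b` is ruled out by scope drift even though it browses better. That is exactly the kind of trade the buyer is asked to make explicit.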

No single model dominates. GPT-5.5 strengthens OpenAI's case for terminal agents, knowledge work, computer use, and broad platform adoption. Claude Opus 4.7 strengthens Anthropic's case for high-trust coding, agent review, documents, and long-running work. Gemini 3.1 Pro remains the Google-native long-context and grounding candidate.
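That division of labor can be written down as a routing table. Each workload gets an ordered pool of candidates, and requests go to the first candidate that is currently available. Everything in this sketch is hypothetical. The workload names are illustrative, and the labels are display names rather than API model IDs. The second choice in each pool is an assumption to confirm through local evaluation, not a recommendation drawn from the benchmark data.

```python
# Hypothetical routing pools: first entry is the default, later entries are fallbacks.
POOLS: dict[str, tuple[str, ...]] = {
    "code_repair": ("Claude Opus 4.7", "GPT-5.5"),
    "review_and_documents": ("Claude Opus 4.7", "GPT-5.5"),
    "terminal_agent": ("GPT-5.5", "Claude Opus 4.7"),
    "knowledge_work": ("GPT-5.5", "Claude Opus 4.7"),
    "search_assistant": ("GPT-5.5", "Gemini 3.1 Pro"),
    "long_context_grounding": ("Gemini 3.1 Pro", "GPT-5.5"),
}


def route(workload: str, unavailable: frozenset[str] = frozenset()) -> str:
    """Return the first candidate in the workload's pool that is not marked unavailable."""
    try:
        pool = POOLS[workload]
    except KeyError:
        raise ValueError(f"no routing pool for workload {workload!r}") from None
    for model in pool:
        if model not in unavailable:
            return model
    raise RuntimeError(f"every candidate for {workload!r} is unavailable")


if __name__ == "__main__":
    print(route("code_repair"))
    # Simulate an outage of the default search candidate.
    print(route("search_assistant", frozenset({"GPT-5.5"})))
```

A second vendor in each pool lets a search-heavy assistant fall back from GPT-5.5 to Gemini 3.1 Pro without a code change. This follows the table's advice to keep OpenAI and Gemini in the routing pool.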

Current provider benchmark tables and model docs

Reference shelf

A short set of current references behind the trust lens: model benchmarks, platform pricing, ecosystem docs, and implementation architecture.

Primary current source for OpenAI's GPT-5.5 benchmark table, agentic coding, computer-use, knowledge-work, pricing, and safety positioning.

Primary benchmark and release source for Opus 4.7, including coding, document reasoning, vision, memory, and computer-use signals.

Useful for evaluating the broader OpenAI platform surface, tool pricing, and current flagship model economics.

Current Google source for Gemini 3.1 Pro's harder-reasoning positioning and rollout across API, Vertex AI, Gemini app, NotebookLM, CLI, Antigravity, and Android Studio.

Adjacent systems reference on why enterprise AI reliability depends on data flow, policy controls, logging, model access, and workflow design.